AI Voices

Respeecher mixes technology and magic to deliver amazing, authentic voices across industries

Streamed on

Clients

Respeecher is trusted by industry leaders worldwide

Media

Respeecher makes headlines around the world

Respeecher

Magic

Sound

Use cases

Movies & TV Series

Luke Skywalker

We helped synthesize a young Luke Skywalker's voice for Disney+'s The Mandalorian

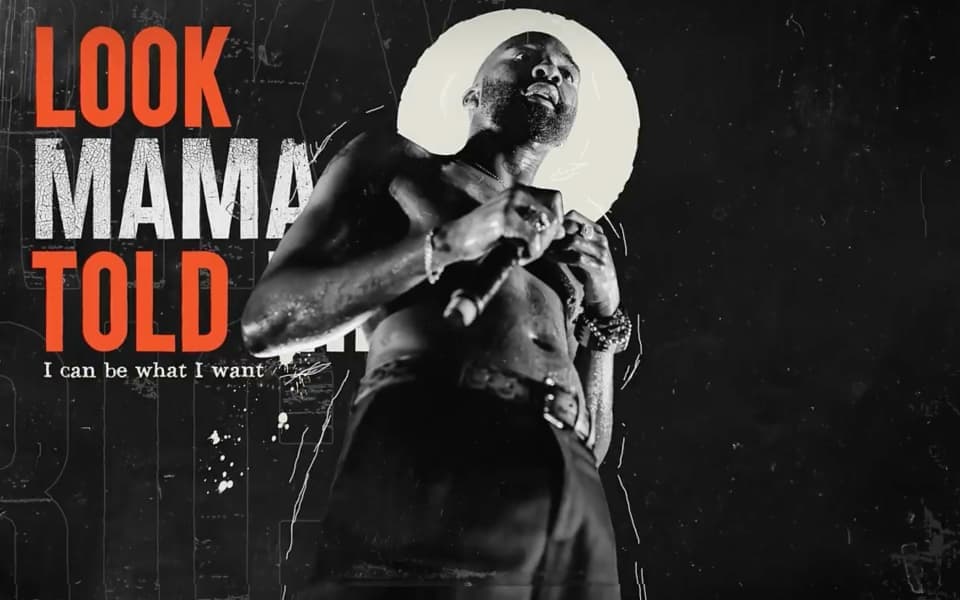

Music

Riky Rick

With our help, the Riky Rick Foundation created a track to encapsulate the late South African artist’s unshakeable spirit

Healthcare

Recovering voices

Respeecher's technology enabled patients with speech disabilities to recover their voice

Advertising

Mondelēz & Ogilvy

AI voices helped Mondelēz International, Ogilvy & Wavemaker create a revolutionary campaign for the Indian market

Testimonials

Respeecher also matched my voice to Scott’s professional reading of the Impromptu text. The end result is a nearly perfect audio artifact with amazing intonation. It sounds flawlessly human—though a bit less of a match to “my” voice than the Vall-E recording—and most importantly, similarly didn’t require multiple days of my time to record. If we hadn’t prioritized releasing an early version to show folks what that sounded like, the Respeecher team was confident that with more iterations we could have gotten it near-perfect.

Applications

Take your projects to the next level with Respeecher's cutting-edge voice solutions

Cross-Language Voice Cloning

Celebrities from around the world support Ukraine 🇺🇦

With Respeecher’s cross-language voice cloning, your voice, too, can make a global impact

Support Ukraine