Many of us remember the good old days of dubbing: the on-screen actor delivers dialog in our language while their lips move out of sync, clearly shaping words in another one.

Besides looking unnatural, classic dubbing has another drawback: fitting the localized text to the actor's lip movements and expressions often means changing the meaning of the dialog itself.

Either way, the viewer gets a poorer experience. Subtitles preserve the authentic performance, but viewers who read them can't enjoy it the way the native audience does, because their eyes are on the text at the bottom of the screen.

This is where AI comes to the rescue. Synthetic dubbing, built on voice synthesis, eliminates the need for traditional voice actors and the challenges that come with lip-syncing.

The traditional dubbing process and its drawbacks

The traditional dubbing process is pretty straightforward, albeit challenging to execute.

- First, the producer finds a dubbing studio for the target language.

- The producer sends the original video material and the texts for every dialog to the studio.

- The studio starts searching for voice actors (often the same people who voice dozens of films in their country every year).

- Then the painstaking recording process begins. Voice actors read the dialog in the studio, timing it to what happens on screen and matching the expressions of the original actors.

- Finally, sound engineers mix the new audio track with the video, and voilà: the movie is ready for local cinemas.

This process has several significant disadvantages, both in terms of viewing experience and production.

- Traditional dubbing is expensive. Exact figures are hard to pin down, but a reasonable estimate is $100,000 to $150,000 per language for a feature film.

- Dubbing is slow. Although voicing takes less time than producing the original content, a proper dub can take months to complete.

- Dubbing overshadows the original acting, as we noted at the start of this post.

AI (deepfake) dubbing mitigates nearly every one of these difficulties. Just as importantly, it does not introduce new complications, particularly when it follows the principles of ethical voice cloning.

Here's how deepfake dubbing changes the game for good

If you've never heard of deepfakes, a good place to start is our article What Are Deepfakes: Synthetic Media Explained. In short, deepfake technology uses machine learning to modify video and audio content.

The most striking example of automated dubbing and localization is TrueSync, a synthetic film dubbing technology announced by Flawless AI. It replaces an actor's lip movements with deepfake-generated ones that match the dubbed dialog.

Lip sync has always been dubbing's most conspicuous flaw. A mismatch may be tolerable between European languages, but when an American film is dubbed into Chinese, for example, almost every lip movement is out of sync.

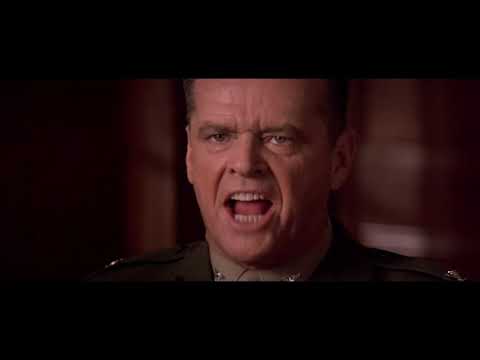

The mouth movements of an English speaker are almost impossible to adapt to Asian languages, which is exactly the problem AI dubbing sets out to solve. With this in mind, check out what Flawless AI has done:

We hope you enjoyed watching Jack Nicholson speak French and German as much as we did.

So how does AI dubbing work?

In short, a neural network trained on an actor's original footage learns the characteristic features of their face.

The network then analyzes the same features in people speaking the target language. Once the foreign-language dub is ready, it can edit the original actor's face so that their lips match the new dialog.
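This post doesn't describe TrueSync's internals, and a production system learns mouth shapes with a neural network rather than a lookup table. Still, the core requirement described above, turning timed dialog in the new language into a target mouth shape for every video frame, can be sketched in a few lines. Treat this as a toy under stated assumptions: the `Phoneme` timings are assumed to come from a forced aligner, and the `VISEMES` table, its shape names, and `viseme_track` are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical, heavily simplified phoneme-to-viseme (mouth shape) table.
# Real AI dubbing systems learn mouth shapes from footage instead of a fixed lookup.
VISEMES = {
    "p": "closed", "b": "closed", "m": "closed",
    "f": "lip-teeth", "v": "lip-teeth",
    "o": "rounded", "u": "rounded", "w": "rounded",
    "a": "open",
    "i": "spread", "e": "spread",
}


@dataclass
class Phoneme:
    symbol: str   # phoneme label, assumed to come from a forced aligner
    start: float  # start time in seconds
    end: float    # end time in seconds


def viseme_track(phonemes: list[Phoneme], duration: float, fps: int = 24) -> list[str]:
    """Return the target mouth shape for every video frame of a dubbed line."""
    frames = []
    for k in range(round(duration * fps)):
        t = (k + 0.5) / fps  # sample each frame at its temporal center
        shape = "rest"  # no phoneme active at this instant: mouth at rest
        for p in phonemes:
            if p.start <= t < p.end:
                # Phonemes missing from the table fall back to a generic shape.
                shape = VISEMES.get(p.symbol, "neutral")
                break
        frames.append(shape)
    return frames


if __name__ == "__main__":
    # Hypothetical timing for the French word "bonjour" (simplified symbols).
    dub = [
        Phoneme("b", 0.00, 0.06),
        Phoneme("o", 0.06, 0.18),
        Phoneme("zh", 0.18, 0.28),
        Phoneme("u", 0.28, 0.40),
        Phoneme("r", 0.40, 0.48),
    ]
    print(viseme_track(dub, duration=0.5))
```

A face-editing model would then be asked to render each frame's mouth in the listed shape; the learned approach described in this post replaces the fixed table with features extracted from real footage.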

So far, this technology has only one drawback: some especially attentive viewers feel that the modified scenes don't look as natural as the original performances. But keep in mind that the technology is still young and keeps improving.

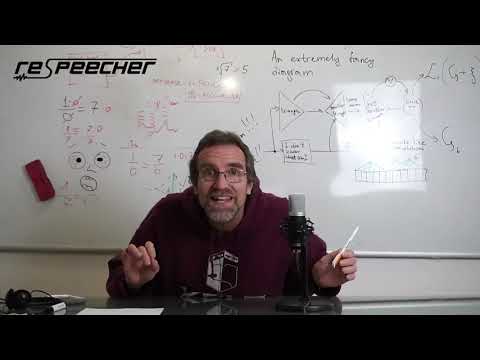

Voice cloning, meanwhile, adds an entirely new set of tools. Respeecher, the leading innovator in AI voice generator technology, lets movie producers and content creators make anyone sound like someone else.

Check out this demo we recently recorded for folks interested in the technology just like yourself:

In our How Voice Cloning Makes Dubbing and Localization Easier article, we outlined the impact of voice cloning software on the dubbing market.

It's astonishing how much this technology democratizes the dubbing market and lowers the barrier for small studios entering foreign markets. Check the article above to learn more about the advances in voice synthesis.

By combining the two technologies, producers can achieve outstanding dubbing quality. Voice cloning carries the actor's original voice over into another language, and the modified facial animation makes the lips match that new speech, so the dubbed voice fits the original actor's facial expressions.

The result gives the impression that the actor really speaks, say, Chinese or Japanese, so viewers cannot tell whether the actor is speaking their native language or not.

AI dubbing concerns that you needn’t worry about

The first and greatest concern is the ethical use of the technology. When you can easily imitate the voice of the US President, you have to take the technology seriously. At Respeecher, ethical voice cloning is a priority.

We only work with voices whose original speakers have given their written permission, and only to the extent that permission allows.

We also watermark the speech our system generates. The watermarks are not noticeable to the listener, but they let a professional easily detect synthesized speech.
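Respeecher's watermarking scheme is not described here, so the following is a generic stand-in: a textbook spread-spectrum watermark. A faint pseudo-random pattern derived from a secret key is added to the audio; anyone holding the key can detect it by correlation, while to everyone else it is just low-level noise. The function names, the key, and the `strength` value are illustrative assumptions. A real watermark would also be shaped to stay inaudible and to survive compression and editing, which this sketch does not attempt.

```python
import math
import random


def key_sequence(key: int, n: int) -> list[float]:
    """Pseudo-random +/-1 sequence; only holders of `key` can regenerate it."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(samples: list[float], key: int, strength: float = 0.005) -> list[float]:
    """Add a low-amplitude keyed noise pattern to the audio samples."""
    chips = key_sequence(key, len(samples))
    return [s + strength * c for s, c in zip(samples, chips)]


def detect(samples: list[float], key: int, strength: float = 0.005) -> tuple[bool, float]:
    """Correlate with the keyed pattern; marked audio scores close to `strength`."""
    chips = key_sequence(key, len(samples))
    score = sum(s * c for s, c in zip(samples, chips)) / len(samples)
    return score > strength / 2, score


if __name__ == "__main__":
    rate = 16_000
    # Three seconds of a quiet 440 Hz tone stands in for synthesized speech.
    speech = [0.1 * math.sin(2 * math.pi * 440 * n / rate) for n in range(3 * rate)]
    marked = embed(speech, key=2024)
    print(detect(marked, key=2024))  # should be flagged, score close to 0.005
    print(detect(speech, key=2024))  # should not be flagged, score close to 0
```

The design choice is the usual one for spread-spectrum marks: the keyed pattern correlates perfectly with itself but only weakly with the audio, so the detection score separates marked and unmarked signals even when the pattern is far quieter than the speech.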

In addition, we are constantly working on algorithms that can tell synthetic speech from original speech, even when it is not watermarked. As with any advanced technology, society will learn to put it to broad use while minimizing harm, guided by the principles of AI ethics. It's simply a matter of time.

Another concern is the quality of the synthesized speech and the modified picture. Is it really good enough to make the modified content indistinguishable from the original? Judge for yourself from the examples above. The strongest argument that it is good enough is the interest of large Hollywood studios: in recent years, AI voice and face-synthesis technologies have been used in blockbusters such as Star Wars and The Irishman, in line with the principles of AI ethics.

Last but not least is the question of cost. Do these new technologies cost a fortune?

Well, here's a quote from Director Scott Mann, co-founder of Flawless: "Our research suggests this will differ on a case-by-case basis. But when Hollywood is likely to pay $60 million to remake Another Round in English, I can safely say this would cost nearly $60 million less."

It couldn't be put better. If a $60 million remake could be done for nearly $60 million less, production costs drop by at least an order of magnitude.

What about small content producers? We recently launched the Voice Marketplace, where any creator can license an actor's original voice and use it for voice-overs and localization at an affordable price.

Conclusion

We hope we've convinced you that synthetic film dubbing at least deserves your attention. If you'd like to learn more about our services or get a consultation on using our AI voice generator technology for your project, let us know. We'll gladly tell you more and help you work through pricing and use cases.